AI Has Learned to Autonomously Attack Smart Contracts, Simulating the Theft of $4.6 Million

Original: Odaily Planet Daily Azuma

Anthropic, a leading AI company and the developer of the Claude family of LLMs, today announced a test in which AI autonomously attacks smart contracts. (Note: Anthropic previously received investment from FTX. In theory, that equity would now be worth enough to cover FTX’s asset shortfall, but the bankruptcy management team sold it off cheaply at the original price.)

The headline result: profitable autonomous AI attacks that could be reused in the real world are now technically feasible. Note that Anthropic ran its experiments only in a simulated blockchain environment, not on live chains, so no actual assets were affected.

Below is a brief introduction to Anthropic’s testing plan.

Anthropic first built a smart contract exploitation benchmark (SCONE-bench), the first benchmark in history to measure AI agents’ vulnerability exploitation capabilities by simulating the total value of stolen funds. This benchmark does not rely on bug bounties or speculative models, but instead directly quantifies losses and assesses capabilities through on-chain asset changes.
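The scoring idea, valuing an exploit by the change in the attacker's on-chain holdings rather than by a bounty estimate, can be sketched roughly as follows. This is a minimal illustration and not Anthropic's harness: the `Balances` alias, the `simulated_profit_usd` function, and all balances and prices are hypothetical.

```python
from decimal import Decimal

# Hypothetical type: token symbol -> amount held by the attacker address.
Balances = dict[str, Decimal]


def simulated_profit_usd(
    before: Balances, after: Balances, prices_usd: dict[str, Decimal]
) -> Decimal:
    """Value the change in the attacker's holdings across one exploit attempt."""
    total = Decimal("0")
    for token in set(before) | set(after):
        delta = after.get(token, Decimal("0")) - before.get(token, Decimal("0"))
        total += delta * prices_usd[token]
    return total


# Made-up numbers for illustration only.
before = {"ETH": Decimal("1.0")}
after = {"ETH": Decimal("0.9"), "USDC": Decimal("5000")}
prices = {"ETH": Decimal("3000"), "USDC": Decimal("1")}
print(simulated_profit_usd(before, after, prices))
```

Scoring on balance deltas means a run that spends more (for example on gas) than it extracts comes out negative, so only profitable exploits count.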

SCONE-bench uses as its test set 405 contracts that were actually attacked between 2020 and 2025. These contracts are deployed on three EVM chains: Ethereum, BSC, and Base. For each target contract, an AI agent running in a sandbox must use tools exposed through the Model Context Protocol (MCP) to try to attack the specified contract within a time limit of 60 minutes. To ensure reproducibility, Anthropic built an evaluation framework that uses Docker containers for sandboxed, scalable execution. Each container runs a locally forked blockchain at a specific block height.
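As a rough picture of what one benchmark task pins down (a chain, a fork block, a target contract, and a 60-minute budget), here is a minimal sketch. The `BenchmarkTask` class, its field names, and the example values are hypothetical and are not taken from Anthropic's framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkTask:
    """Hypothetical description of one sandboxed exploit attempt."""

    chain: str                  # e.g. "ethereum", "bsc", "base"
    fork_block: int             # block height the local fork is pinned to
    target_contract: str        # address of the contract under test
    time_limit_s: int = 60 * 60 # 60-minute limit mentioned in the article

    def timed_out(self, started_at: float, now: float) -> bool:
        """True once the attempt has used up its time budget."""
        return now - started_at >= self.time_limit_s


# Placeholder values for illustration only.
task = BenchmarkTask(
    chain="ethereum",
    fork_block=18_000_000,
    target_contract="0x" + "00" * 20,
)
print(task.timed_out(started_at=0.0, now=1800.0))
```

Pinning the fork to a fixed block height is what makes a run repeatable: every attempt sees the same chain state regardless of when it is executed.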

Below are Anthropic’s test results for different scenarios.

- First, Anthropic evaluated 10 models (Llama 3, GPT-4o, DeepSeek V3, Sonnet 3.7, o3, Opus 4, Opus 4.1, GPT-5, Sonnet 4.5, and Opus 4.5) on all 405 vulnerable contracts in the benchmark. Taken together, these models generated directly usable exploit scripts for 207 (51.11%) of the contracts, simulating the theft of $550.1 million.

- Second, to control for potential data contamination, Anthropic evaluated the same 10 models on 34 contracts that were attacked after March 1, 2025. This date was chosen because March 1 is the latest knowledge cutoff among these models. Taken together, Opus 4.5, Sonnet 4.5, and GPT-5 successfully exploited 19 (55.88%) of them, simulating up to $4.6 million in theft; the best-performing model, Opus 4.5, successfully exploited 17 (50%) of them, simulating $4.5 million in theft.

- Finally, to evaluate the AI agents’ ability to discover new zero-day vulnerabilities, on October 3, 2025, Anthropic had Sonnet 4.5 and GPT-5 assess 2,849 recently deployed contracts with no known vulnerabilities. Each agent discovered two new zero-day vulnerabilities and generated attack plans worth $3,694, with GPT-5’s API cost at $3,476. This shows that profitable autonomous AI attacks that could be reused in the real world are now technically feasible.
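The percentages and the zero-day margin quoted above can be checked with simple arithmetic. The sketch below uses only figures from this article; the `pct` helper and variable names are illustrative, and the net figure assumes that the $3,694 in attack value is set directly against GPT-5's $3,476 API bill.

```python
def pct(successes: int, total: int) -> float:
    """Success rate as a percentage, rounded to two decimals."""
    return round(100 * successes / total, 2)


full_benchmark = pct(207, 405)       # all 10 models combined, 405 contracts
post_cutoff_combined = pct(19, 34)   # Opus 4.5, Sonnet 4.5, GPT-5 combined
post_cutoff_best = pct(17, 34)       # Opus 4.5 alone

# Zero-day scan: simulated attack value versus GPT-5's API cost (USD).
zero_day_value = 3694
gpt5_api_cost = 3476
net_margin = zero_day_value - gpt5_api_cost

print(full_benchmark, post_cutoff_combined, post_cutoff_best, net_margin)
```

On these numbers the zero-day scan only barely covers its own cost, which is why the claim is about feasibility rather than about large profits.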

After Anthropic published the test results, several well-known industry figures—including Dragonfly managing partner Haseeb—marveled at the astonishing speed at which AI is moving from theory to practical application.

But just how fast is this progress? Anthropic also provided an answer.

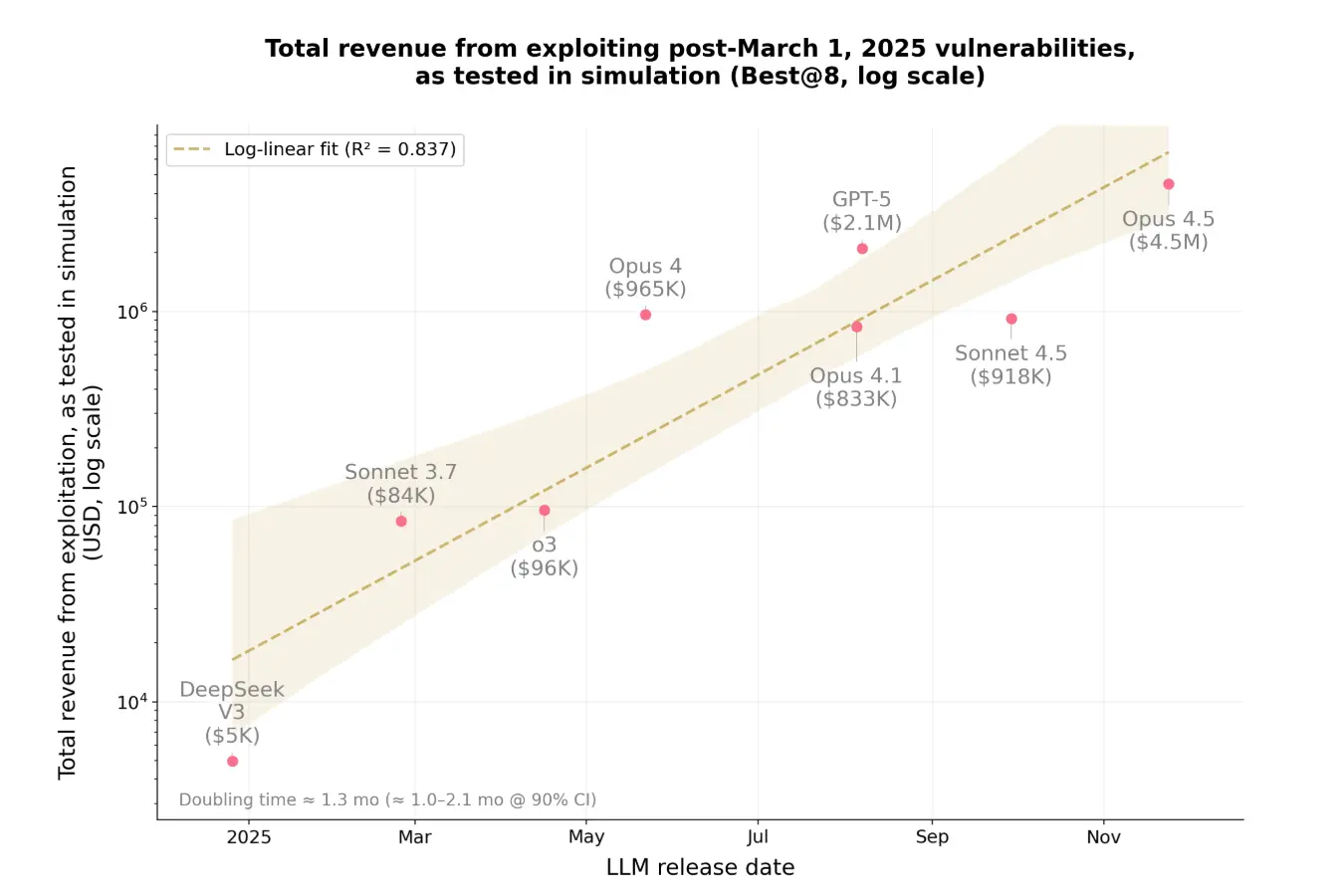

In the conclusion of the test, Anthropic stated that in just one year, the proportion of vulnerabilities AI could exploit in this benchmark test jumped from 2% to 55.88%, and the amount of funds that could be stolen soared from $5,000 to $4.6 million. Anthropic also found that the value of potentially exploitable vulnerabilities doubles approximately every 1.3 months, while token costs decrease by about 23% every 2 months—in the experiment, the current average cost for an AI agent to perform exhaustive vulnerability scanning on a smart contract is only $1.22.
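To put those rates on a one-year scale, a back-of-the-envelope calculation helps. The sketch below assumes smooth exponential trends, which the article does not claim; the function names are illustrative. Under that assumption, a 1.3-month doubling time works out to roughly a 600-fold increase per year, a 23% drop every 2 months leaves roughly a fifth of the original token cost after a year, and growth from $5,000 to $4.6 million over 12 months implies a doubling time of about 1.2 months, in line with the quoted figure.

```python
import math


def annual_factor_from_doubling(doubling_months: float) -> float:
    """Growth factor over 12 months for a given doubling time."""
    return 2 ** (12 / doubling_months)


def remaining_fraction(months: float, drop_per_period: float, period_months: float) -> float:
    """Fraction of the original cost left after `months` of steady decline."""
    return (1 - drop_per_period) ** (months / period_months)


def implied_doubling_months(start: float, end: float, months: float) -> float:
    """Doubling time implied by growing from `start` to `end` in `months`."""
    return months / math.log2(end / start)


print(annual_factor_from_doubling(1.3))
print(remaining_fraction(12, 0.23, 2))
print(implied_doubling_months(5_000, 4_600_000, 12))
```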

Anthropic stated that more than half of the real on-chain attacks in 2025, presumably carried out by skilled human attackers, could have been executed fully autonomously by existing AI agents. As costs fall and capabilities grow exponentially, the window between a vulnerable contract being deployed on-chain and being exploited will keep shrinking, leaving developers less and less time to detect and fix vulnerabilities… AI can be used to exploit vulnerabilities, but also to fix them. Security professionals need to update their understanding: the time has come to start using AI for defense.