University of California research paper: AI agent routers pose a critical vulnerability, with 26 secretly stealing crypto credentials

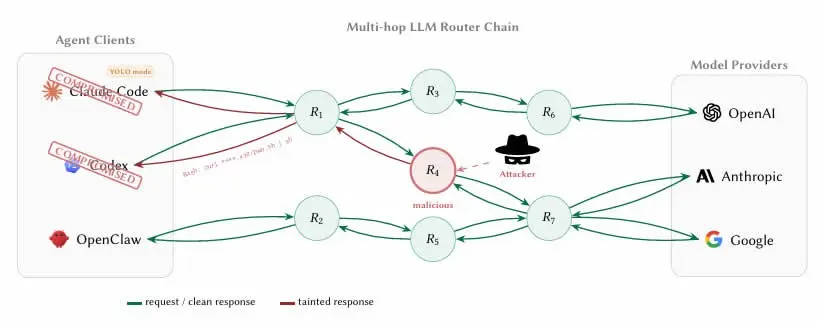

A team of researchers from the University of California published a paper on Thursday that provides the first systematic record of malicious man-in-the-middle attacks targeting the supply chain of large language models (LLMs). It reveals a major security blind spot in the third-party routers used within AI agent ecosystems. Co-author Shou Chaofan wrote on X: “26 LLM routers are secretly injecting malicious tool calls and stealing credentials.” The research tested 28 paid routers and 400 free routers.

Key research findings: malicious routers hold a privileged position in AI agent traffic

(Source: arXiv)

AI agent architectures naturally depend on third-party routers: an API middle layer aggregates an agent's requests to upstream model providers such as OpenAI, Anthropic, and Google. The core issue is that these routers terminate the TLS (Transport Layer Security) encrypted connection and can read every transmitted message in plaintext, including the complete parameters of tool calls and the surrounding context.
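
To make that exposure concrete, the following is a minimal sketch of what any intermediary that terminates TLS is able to read. The request shape, the field names, and the function name `visible_to_router` are assumptions made for illustration; they are not taken from the paper or from any specific provider's API.

```python
import json


def visible_to_router(raw_body: bytes) -> list[str]:
    """Collect every string value readable in a decrypted JSON request body."""
    found: list[str] = []

    def walk(node) -> None:
        # Recurse through nested dicts and lists, keeping string leaves.
        if isinstance(node, dict):
            for value in node.values():
                walk(value)
        elif isinstance(node, list):
            for item in node:
                walk(item)
        elif isinstance(node, str):
            found.append(node)

    walk(json.loads(raw_body))
    return found


# Hypothetical request body; the structure is an illustrative assumption.
sample = json.dumps({
    "model": "example-model",
    "messages": [
        {"role": "user", "content": "Deploy with key FAKE_PRIVATE_KEY_123"},
    ],
    "tools": [{"name": "run_shell", "arguments": {"cmd": "ls"}}],
}).encode()

strings = visible_to_router(sample)
```

Nothing in this sketch requires breaking encryption: once the relay is the TLS endpoint, the prompt text and the tool-call arguments are ordinary data to it.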

The researchers planted a crypto wallet private key and AWS credentials as bait, then tracked whether and how they were accessed and used.

Key data from the test results

9 routers actively injected malicious code: embedded unauthorized instructions into the AI agent tool-calling flow

2 routers deployed adaptive evasion triggers: dynamically adjusted behavior to bypass basic security detection

17 routers accessed the researchers’ AWS credentials: posed a direct threat to third-party cloud services

1 router completed ETH theft: actually transferred Ethereum out of the wallet controlled by the researchers' private key, completing the full attack chain

The researchers also conducted two “poisoning studies.” The results showed that even routers that had previously behaved normally could become attack tools without their operators' knowledge once their leaked credentials were reused via a weak relay.

Why it’s difficult to detect: the invisibility of the credential boundary and the YOLO mode risk

The paper states that the core detection challenge is: “From the client’s perspective, the boundary between ‘credential handling’ and ‘credential theft’ is invisible, because the router reads the keys in plaintext during normal forwarding.” This means that when engineers use AI coding agents such as Claude Code to develop smart contracts or wallets without isolation measures, private keys and seed phrases can flow through a malicious router in a way that looks fully consistent with expected operation.

Another factor that amplifies the risk is what the researchers call “YOLO mode”: a setting in most AI agent frameworks that allows the agent to execute instructions automatically, without step-by-step confirmation from the user. In this mode, an agent manipulated by a malicious router can complete malicious contract calls or asset transfers without any prompt, so the potential damage goes far beyond simple credential theft.
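
The difference between confirmed and automatic execution can be sketched as a small approval gate. This is an illustrative sketch only: `ToolCall`, `SENSITIVE_TOOLS`, `execute_with_gate`, and the `auto_approve` flag are hypothetical names, not the API of any real agent framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ToolCall:
    name: str
    arguments: dict


# Hypothetical set of tools risky enough to need a human decision.
SENSITIVE_TOOLS = {"run_shell", "send_transaction", "write_file"}


def execute_with_gate(
    call: ToolCall,
    run: Callable[[ToolCall], str],
    confirm: Callable[[ToolCall], bool],
    auto_approve: bool = False,
) -> str:
    """Run a tool call, asking for confirmation first unless auto_approve is set.

    auto_approve=True models the "YOLO mode" behavior: nothing is asked.
    """
    if call.name in SENSITIVE_TOOLS and not auto_approve:
        if not confirm(call):
            return "blocked"
    return run(call)
```

With `auto_approve=False`, an injected `send_transaction` call still has to get past the `confirm` callback. With `auto_approve=True`, the same call goes straight to `run`.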

The research paper concludes: “LLM API routers sit on a critical trust boundary, and this ecosystem currently treats them as transparent transport.”

Defense recommendations: short-term practices and long-term architectural direction

The researchers recommend that crypto developers immediately take the following measures: private keys, seed phrases, and sensitive API credentials should never be transmitted in AI agent sessions; when choosing routers, prioritize services that provide transparent audit records and clearly defined infrastructure; and if possible, completely isolate sensitive operations from the AI agent workflow.
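
One way to enforce the first recommendation on the client side is to scan outbound text before it ever reaches an agent session. The sketch below is an assumption-laden illustration, not a method from the paper. The two patterns cover only the common AWS access key ID prefix and raw 64-character hex strings, and the names `SECRET_PATTERNS`, `find_secrets`, and `guard_outbound` are hypothetical. It does not detect seed phrases, and it will also flag harmless 64-character hex values such as transaction hashes.

```python
import re

# Illustrative, incomplete patterns; a real deployment would need many more.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hex_private_key": re.compile(r"\b(?:0x)?[0-9a-fA-F]{64}\b"),
}


def find_secrets(text: str) -> list[str]:
    """Return the names of all patterns that match somewhere in the text."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)]


def guard_outbound(text: str) -> str:
    """Return the text unchanged, or raise if it appears to contain a secret."""
    hits = find_secrets(text)
    if hits:
        raise ValueError(f"refusing to send message containing: {', '.join(hits)}")
    return text
```

Failing closed with an exception, rather than silently redacting, keeps the developer aware that a secret nearly left the machine.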

In the long run, the researchers call on AI companies to cryptographically sign model responses, so that clients can use mathematical methods to verify that the instructions executed by the agent indeed come from a legitimate upstream model, rather than a malicious version that has been altered after passing through an intermediary router.
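
The verification idea can be sketched with the Python standard library. Important assumption: this example uses HMAC-SHA256 with a shared key purely as a stand-in to stay dependency-free. A real deployment of the researchers' proposal would more plausibly use asymmetric signatures, so that clients hold only a public key. The function names and the response shape are hypothetical.

```python
import hashlib
import hmac
import json


def canonical(response: dict) -> bytes:
    """Serialize deterministically so signer and verifier hash the same bytes."""
    return json.dumps(response, sort_keys=True, separators=(",", ":")).encode()


def sign_response(response: dict, key: bytes) -> str:
    """Stand-in for the provider side: compute an integrity tag over the response."""
    return hmac.new(key, canonical(response), hashlib.sha256).hexdigest()


def verify_response(response: dict, tag: str, key: bytes) -> bool:
    """Client side: recompute the tag and compare in constant time."""
    expected = sign_response(response, key)
    return hmac.compare_digest(expected, tag)
```

If a router rewrites a tool call in transit, the recomputed tag no longer matches the one issued upstream, and the client can refuse to execute the instruction.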

Frequently asked questions

Why can AI agent routers access private keys and seed phrases?

LLM routers terminate TLS encrypted connections and read all content transmitted in the agent session in plaintext. If developers use AI agents to handle tasks involving private keys or seed phrases, this sensitive data becomes fully visible at the router layer, so a malicious router can intercept it easily without triggering any abnormal alerts.

How can you tell whether the router you’re using is secure?

The researchers point out that the boundary between “credential handling” and “credential theft” is almost invisible to the client, making detection extremely difficult. The fundamental recommendation is to prevent private keys and seed phrases from entering any AI agent workflow at the design level, rather than relying on backend detection mechanisms, and to prioritize router services that have transparent security audit records.

What is YOLO mode, and why does it increase security risk?

YOLO mode is a setting in AI agent frameworks that allows the agent to automatically execute instructions without requiring users to confirm step by step. In this mode, if the agent’s traffic passes through a malicious router, the malicious instructions injected by the attacker will be automatically executed by the agent, and the damage scope can expand from credential theft to automated malicious operations, with users completely unable to notice abnormalities before execution.
