How does Livepeer operate? A detailed breakdown of video transcoding and AI video processing workflows

Video processing is a foundational element of internet infrastructure. Whether it's live streaming, short-form video, or AI-generated content, video files almost always require transcoding, compression, and multi-resolution adaptation to support different devices and network conditions. Traditional video platforms typically rely on centralized cloud services for these capabilities. However, as AI video and real-time generative media advance, the demand for GPU computing continues to grow—driving up the costs of video processing.

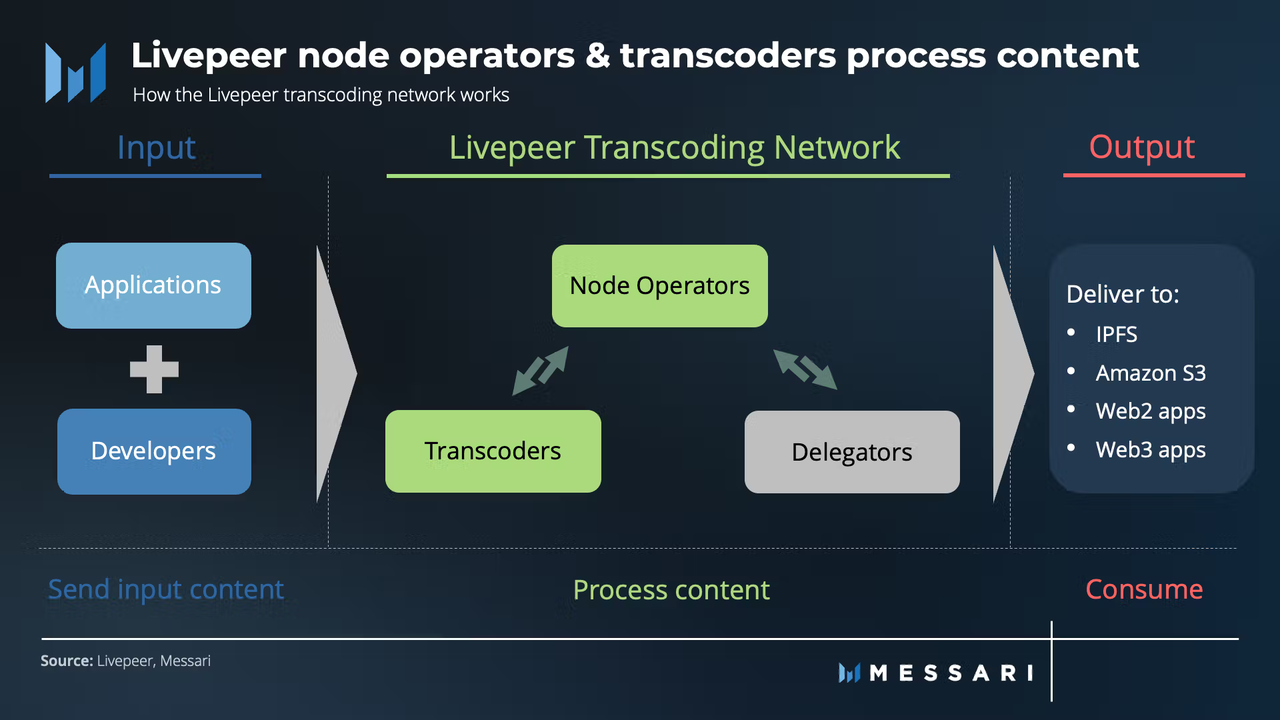

In this context, decentralized video infrastructure is gaining traction. Livepeer delivers video transcoding and real-time AI video processing for developers through an open network of GPU nodes. Unlike traditional cloud platforms, Livepeer prioritizes an open network architecture and market-driven resource coordination, steadily expanding into domains like AI Avatars, real-time AI video, and generative media.

What Is Livepeer’s Video Processing Network?

Livepeer is a decentralized video processing network built on Ethereum, purpose-built for video transcoding, live streaming, and AI-powered video computation.

On traditional platforms, video processing is centralized and handled by dedicated servers. In the Livepeer network, video tasks are distributed across multiple GPU nodes that collaboratively handle transcoding and AI video processing.

Image source: Messari


The network consists of the following key participants:

- Gateway: Receives video requests and dispatches tasks
- Orchestrator: Handles video transcoding and AI video processing
- Delegator: Supports node operation by delegating LPT
- GPU Node: Supplies actual computing power

LPT is the network's core coordination token, used for node staking and network incentives.

What Happens When a User Uploads a Video?

When a developer or application uploads a video, the task is first sent to the Gateway—the critical bridge between the application layer and the Livepeer network. The Gateway authenticates the video request and, based on network status, routes the task to the most suitable Orchestrator node.

Video tasks typically include:

- Live video streams
- Video-on-demand files
- AI video processing requests
- Real-time video inference tasks

The Gateway assigns tasks according to node performance, network load, and node reputation.

This dynamic approach enables Livepeer to efficiently allocate GPU resources across the network.
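As a rough illustration of assigning tasks by performance, load, and reputation, the sketch below ranks candidate nodes with a weighted score. The `OrchestratorInfo` fields, the weights, and the sample values are all hypothetical; the article does not specify how a Gateway actually combines these signals.

```python
from dataclasses import dataclass


@dataclass
class OrchestratorInfo:
    """Hypothetical snapshot of what a Gateway might track per node."""
    name: str
    performance: float  # 0..1, higher is better (e.g. normalized GPU throughput)
    load: float         # 0..1, fraction of capacity currently in use
    reputation: float   # 0..1, e.g. historical success rate


def score(node: OrchestratorInfo,
          w_perf: float = 0.4, w_load: float = 0.3, w_rep: float = 0.3) -> float:
    """Weighted score: reward performance and reputation, penalize load."""
    return (w_perf * node.performance
            + w_load * (1.0 - node.load)
            + w_rep * node.reputation)


def pick_orchestrator(nodes: list[OrchestratorInfo]) -> OrchestratorInfo:
    """Return the highest-scoring node; raise if no node is available."""
    if not nodes:
        raise ValueError("no orchestrators available")
    return max(nodes, key=score)


# Made-up sample data for illustration only.
nodes = [
    OrchestratorInfo("orch-a", performance=0.9, load=0.8, reputation=0.95),
    OrchestratorInfo("orch-b", performance=0.7, load=0.2, reputation=0.90),
    OrchestratorInfo("orch-c", performance=0.5, load=0.1, reputation=0.60),
]
best = pick_orchestrator(nodes)
print(best.name)
```

With these sample numbers the fastest node is not chosen, because it is also the most heavily loaded. That is the kind of trade-off a load-aware assignment is meant to capture.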

How Does the Gateway Distribute Video Tasks?

The Gateway’s primary function is to connect applications with the decentralized compute network.

Upon receiving a video request, the Gateway identifies an available Orchestrator and assigns the video processing task. To minimize latency, the Gateway prioritizes nodes with high stability and superior GPU performance.

Unlike the fixed server model of traditional video platforms, Livepeer’s task distribution resembles an open marketplace.

Nodes compete for processing opportunities, incentivizing high service quality and reliability.

Orchestrators must stake LPT to participate, and both their stake and their reputation affect their chances of receiving tasks.

How Does the Orchestrator Handle Video Transcoding?

The Orchestrator is the principal compute node within the Livepeer network.

When assigned a video task, the Orchestrator leverages its GPU resources to perform transcoding. This process includes adjusting resolution, converting video encoding formats, compressing files, and generating multi-bitrate outputs.

For example, a single livestream may require simultaneous generation of 480p, 720p, and 1080p streams to support diverse devices and network conditions.
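To make the 480p/720p/1080p example concrete, here is a minimal sketch that builds a single `ffmpeg` command producing all three renditions from one input. It is not Livepeer's transcoding pipeline: the bitrates, file names, and the `Rendition` type are assumptions, and it uses the software `libx264` encoder for portability, whereas a GPU node would typically use a hardware encoder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    name: str
    height: int         # output height in pixels
    video_bitrate: str  # illustrative target bitrate, not a Livepeer default


# Illustrative ladder matching the example above; bitrates are assumptions.
LADDER = [
    Rendition("480p", 480, "1000k"),
    Rendition("720p", 720, "2500k"),
    Rendition("1080p", 1080, "5000k"),
]


def ffmpeg_args(src: str, ladder: list[Rendition]) -> list[str]:
    """Build one ffmpeg command that emits every rendition from a single input."""
    args = ["ffmpeg", "-i", src]
    for r in ladder:
        # Output options apply to the output file that follows them.
        args += [
            "-vf", f"scale=-2:{r.height}",  # keep aspect ratio, force an even width
            "-c:v", "libx264",
            "-b:v", r.video_bitrate,
            "-c:a", "aac",
            f"out_{r.name}.mp4",
        ]
    return args


cmd = ffmpeg_args("input.mp4", LADDER)
print(" ".join(cmd))
```

The function only assembles the argument list; passing `cmd` to `subprocess.run` would execute it on a machine with `ffmpeg` installed.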

As AI video demand grows, Orchestrators are also responsible for real-time AI video inference tasks, such as:

- AI Avatar animation
- Real-time style transfer
- Video content recognition
- AI video enhancement

These workloads typically require high-performance GPUs.

How Does the GPU Network Power AI Video Processing?

AI video workloads demand significantly more GPU computing power than traditional transcoding.

While conventional transcoding focuses on encoding and compression, real-time AI video involves model inference—such as real-time facial animation, AI-driven motion generation, video style transfer, and text-to-video synthesis.

These processes require continuous access to GPU resources, making low-latency compute capabilities essential for real-time AI video.
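A quick way to see why latency matters is to compute the time available per frame. The sketch below assumes a 30 fps stream and made-up inference times; neither figure comes from Livepeer.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame without falling behind the stream."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def keeps_up(inference_ms: float, fps: float) -> bool:
    """True if per-frame inference fits inside the frame budget."""
    return inference_ms <= frame_budget_ms(fps)


print(round(frame_budget_ms(30), 1))  # 33.3
print(keeps_up(25.0, 30))             # True
print(keeps_up(50.0, 30))             # False
```

At 30 fps a model that needs 50 ms per frame falls behind immediately, so real-time workloads need either faster GPUs or lighter models.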

Livepeer’s open GPU node network delivers scalable video compute resources for developers.

Compared to centralized AI video platforms, Livepeer emphasizes open access and decentralized resource coordination.

How Does the Probabilistic Micropayment System Work?

Video processing often requires a high volume of microtransactions. Settling every payment directly on-chain would incur substantial gas fees.

To address this, Livepeer utilizes a probabilistic micropayment system.

Under this model:

- Users generate payment tickets in advance
- Nodes process video tasks upon receiving tickets
- A subset of tickets is randomly selected as winners
- Winning tickets can be redeemed on-chain for their full face value

This system reduces the number of on-chain transactions while maintaining settlement efficiency.

Probabilistic micropayments are a cornerstone of Livepeer’s strategy to minimize on-chain payment costs.
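The statistics behind this scheme can be sketched with a small simulation. Each ticket carries a face value (the amount paid out if it wins) and a winning probability, so its expected value is the product of the two. The numbers below are illustrative. The local random number generator stands in for the real winner selection, which has to be verifiable by both parties and is not modeled here.

```python
import random


def expected_value(face_value: float, win_prob: float) -> float:
    """Average payout per ticket: face value times winning probability."""
    return face_value * win_prob


def simulate(num_tickets: int, face_value: float, win_prob: float, seed: int = 0):
    """Draw tickets and return (winning_count, total_paid)."""
    rng = random.Random(seed)
    wins = sum(1 for _ in range(num_tickets) if rng.random() < win_prob)
    return wins, wins * face_value


# Illustrative numbers only: each ticket is worth 0.005 units on average.
FACE_VALUE = 1.0
WIN_PROB = 0.005
N = 200_000

wins, paid = simulate(N, FACE_VALUE, WIN_PROB)
print("intended total:", N * expected_value(FACE_VALUE, WIN_PROB))
print("actually paid: ", paid)
print("on-chain redemptions:", wins, "instead of", N)
```

Over many tickets the amount actually paid converges on the intended total, while only the winning tickets (roughly `N * WIN_PROB` of them) ever need an on-chain transaction.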

Why Does LPT Staking Influence Task Allocation?

LPT is the central coordination token within the Livepeer network.

Orchestrators must stake LPT to participate in video task processing. Generally, the greater the LPT stake, the higher the likelihood of receiving tasks.

The mechanism serves several purposes:

- Enhancing node stability
- Strengthening network security
- Mitigating malicious node risks
- Incentivizing long-term participation

Delegators can support node operation by delegating LPT and share in network rewards.

Because task allocation is tied to node reputation, Orchestrators must maintain high uptime and deliver reliable video processing quality.
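The relationship between stake and task likelihood can be sketched as a simple normalization. This assumes weighting strictly proportional to total stake (an Orchestrator's own LPT plus LPT delegated to it), which is stronger than the article's "generally"; reputation and uptime are not modeled, and all names and amounts are hypothetical.

```python
def total_stake(self_stake: float, delegated: list[float]) -> float:
    """An Orchestrator's effective stake: its own LPT plus delegated LPT."""
    return self_stake + sum(delegated)


def selection_weights(stakes: dict[str, float]) -> dict[str, float]:
    """Normalize stakes into weights that sum to 1 (assumes proportional weighting)."""
    total = sum(stakes.values())
    if total <= 0:
        raise ValueError("total stake must be positive")
    return {name: s / total for name, s in stakes.items()}


# Made-up stake amounts for illustration only.
stakes = {
    "orch-a": total_stake(1000, [4000, 5000]),  # 10000 LPT in total
    "orch-b": total_stake(2000, [3000]),        # 5000 LPT in total
    "orch-c": total_stake(5000, []),            # 5000 LPT in total
}
weights = selection_weights(stakes)
print(weights)
```

In this example `orch-a` has the smallest self-stake but the largest weight, which shows how delegation can shift work toward nodes that Delegators trust.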

How Does Livepeer Differ from Traditional Video Cloud Platforms?

Livepeer’s most significant distinction from traditional video cloud platforms is its network architecture.

Traditional video services are managed by a single entity overseeing all servers and GPU resources. In contrast, Livepeer coordinates video processing via an open network of independent nodes.

| Comparison | Livepeer | Traditional Video Cloud Platform |
|---|---|---|
| Network Structure | Decentralized | Centralized |
| GPU Source | Open node network | Cloud service providers |
| Processing Model | Distributed task processing | Centralized processing |
| Payment System | On-chain coordination | Platform fees |
| AI Video Support | Real-time GPU network | Cloud GPU services |

As demand for AI video surges, GPU resources become increasingly vital, positioning decentralized video compute networks as a core pillar of Web3 infrastructure.

Summary

Livepeer has established a decentralized video processing network through its Gateway, Orchestrators, and GPU nodes. When users upload videos, the network automatically distributes tasks to GPU nodes for transcoding and AI video processing.

LPT serves as the backbone for node staking, task coordination, and security incentives, while the probabilistic micropayment system helps minimize on-chain payment costs.

With the rise of AI video, AI Avatars, and real-time media, Livepeer has evolved from a traditional transcoding platform to a real-time AI video infrastructure—emerging as a flagship project in the Web3 video compute ecosystem.

FAQs

How does Livepeer handle video transcoding?

Livepeer routes video tasks to Orchestrator nodes, which use GPU resources for video encoding, compression, and multi-resolution output.

Why does Livepeer require GPU nodes?

Video transcoding and AI video inference demand substantial GPU computing power. GPU nodes supply the essential compute resources for the network.

What is the probabilistic micropayment system?

Probabilistic micropayments reduce on-chain payment costs by using randomly winning tickets, thereby cutting the number of on-chain transactions.

What is the role of LPT in the network?

LPT is used for node staking, task coordination, network security, and the Delegator delegation system.

Is Livepeer considered AI infrastructure?

With the progression of real-time AI video and generative media, Livepeer is increasingly positioned as a core component of AI video infrastructure.
